You can access the full course here: VR Development with Controllers

Part 1

In this course, you’ll learn to develop applications that use the hand-tracked controllers in VR. This means tracking the position and rotation of your controllers and also accessing the buttons so you can do different actions when the buttons are pressed.

What Will We Be Learning?

- Headset and controller tracking

- XR input mapping

- Cross-platform approach

What Platforms Can I Use?

- Oculus Rift

- Oculus Go

- Gear VR

- OpenVR (HTC Vive)

- Windows Mixed Reality

Part 2

Camera

In Unity and other 3D game engines, the camera is your view into the 3D world. With VR, there are two cameras (one for each eye), but luckily for us we only need to work with one in Unity.

Types of Experiences

There are two main types of VR experiences.

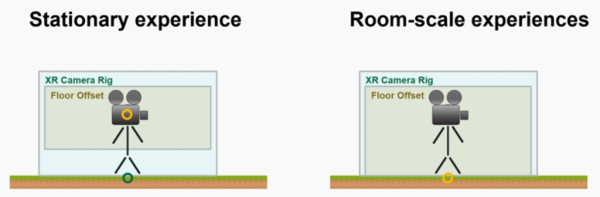

A stationary experience is one where you can look around in VR while the camera position stays fixed. It uses the three axes of rotation to rotate the in-game camera based on the device’s rotation (3 DOF).

A room-scale experience is one where you can both look around and move around in VR. It uses the three axes of rotation as well as the three axes of translation (6 DOF). How the movement is calculated differs from headset to headset.
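To make the distinction concrete, here is a minimal sketch in plain Python (not Unity code) of how a tracked pose might be applied in each case. The `Pose` class and the `apply_tracking` function are hypothetical names used only for illustration, and rotations are stored as simple Euler angles.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pose:
    """A position plus a rotation (like a transform without the scale)."""
    position: tuple  # (x, y, z) in meters
    rotation: tuple  # Euler angles (x, y, z) in degrees


def apply_tracking(device_pose, rotation_only, fixed_position=(0.0, 0.0, 0.0)):
    """Return the camera's local pose for a 3 DOF or 6 DOF experience.

    rotation_only=True  -> stationary (3 DOF): keep a fixed position.
    rotation_only=False -> room-scale (6 DOF): follow position and rotation.
    """
    if rotation_only:
        return Pose(fixed_position, device_pose.rotation)
    return Pose(device_pose.position, device_pose.rotation)


# Hypothetical headset reading: the player stepped sideways and turned.
headset = Pose(position=(0.5, 1.75, -0.25), rotation=(10.0, 45.0, 0.0))

print(apply_tracking(headset, rotation_only=True))   # position stays fixed
print(apply_tracking(headset, rotation_only=False))  # position follows the headset
```

In both cases the rotation comes straight from the device. Only the room-scale case also lets the device drive the position.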

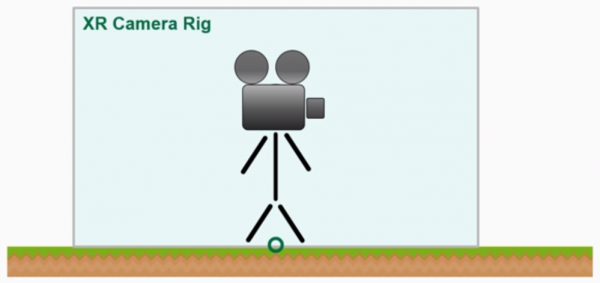

XR Camera Rig

A common practice in Unity is to create an empty GameObject called XR Camera Rig and place your camera and hand controllers (if you have them) inside of that object. We do this so that the player is the only one ever affecting the transform properties of the camera. Directly moving or rotating the camera can cause motion sickness, because it creates a mismatch between what the player sees and what they are physically doing. If the player needs to be moved (inside a vehicle, for example), we move the rig instead.

Another method (and the one we’ll be using) is to have another empty GameObject inside of the XR Camera Rig called Floor Offset. This will hold the camera and hands. The reason for doing this is that it allows us to change the player’s height and gives us finer control than the rig alone. For a room-scale experience, we place the Floor Offset origin on the floor, as the headset tracking will automatically set the Y position. For a stationary experience, we raise the Floor Offset to give the camera a height above the floor.
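The nesting can be sketched as simple position arithmetic. The following plain-Python example (not Unity code) ignores rotation and scale and only adds up positions down the hierarchy. The function names and all the numbers, including the 1.5 m stationary height, are assumptions chosen for illustration.

```python
def add(a, b):
    """Component-wise sum of two (x, y, z) tuples."""
    return tuple(x + y for x, y in zip(a, b))


def camera_world_position(rig_position, floor_offset_height, tracked_local_position):
    """World position of the camera for the hierarchy:

    XR Rig -> Floor Offset -> Head Camera

    Simplification: positions only, no rotation or scale on the parents.
    """
    floor_offset = (0.0, floor_offset_height, 0.0)
    return add(add(rig_position, floor_offset), tracked_local_position)


rig = (4.0, 0.0, 2.0)  # where the rig stands in the world

# Room-scale: the offset sits on the floor, tracking supplies the head height.
room_scale = camera_world_position(rig, 0.0, (0.5, 1.75, 0.0))

# Stationary: tracking supplies no position, so the offset lifts the camera.
stationary = camera_world_position(rig, 1.5, (0.0, 0.0, 0.0))

# Moving the rig (for example, inside a vehicle) moves the camera with it,
# without ever writing to the camera's own tracked position.
moved = camera_world_position((10.0, 0.0, 2.0), 0.0, (0.5, 1.75, 0.0))

print(room_scale, stationary, moved)
```

The tracked local position is never edited by our code. We only move the containers above it, which is the point of the rig.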

Tracked Pose Driver Component

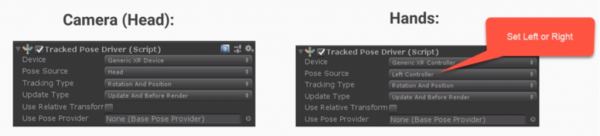

The component we’ll be using to track the head and hands is Unity’s built-in TrackedPoseDriver.

Part 3

The next lesson covers the installation of the TrackedPoseDriver component, as it has been removed from the default list of components.

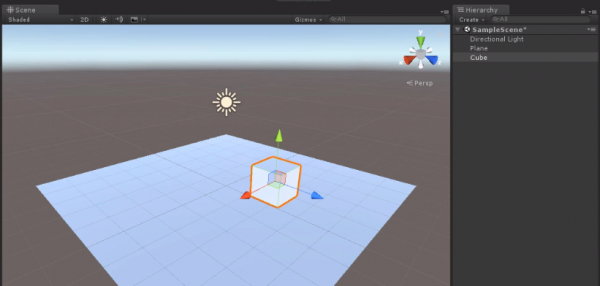

Project Setup

Create a new Unity project (or use the included project files) and delete the main camera. For our quick environment, let’s create a new plane (right click Hierarchy > 3D Object > Plane). Then for something to look at in VR, let’s create a cube and place it off to the side.

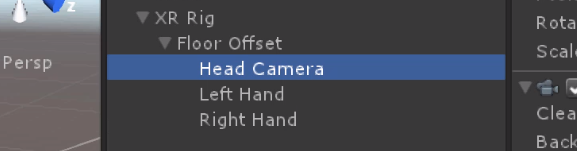

Creating the XR Rig

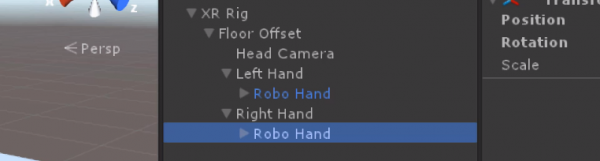

Let’s create the XR Rig we talked about in the previous lesson. Create a new empty GameObject (right click Hierarchy > Create Empty) and call it XR Rig. Inside of that, create another empty object called Floor Offset. Inside of Floor Offset, create two new empty objects: Left Hand and Right Hand. Finally, also inside of Floor Offset, create a new Camera (right click > Camera) and call it Head Camera.

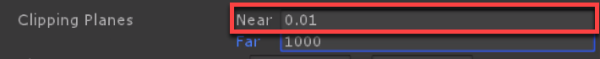

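Selecting the camera, we need to check a couple of properties to make the VR experience better.

- Set the Clipping Planes – Near to 0.01, so that objects held close to your face (such as your hands) are still rendered

- Make sure Target Eye is set to Both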

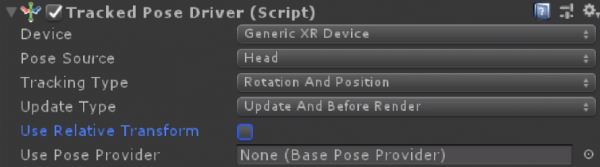
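To see why the near clipping plane matters, here is a small plain-Python sketch (not Unity code). The `is_rendered` function is a hypothetical helper that only checks whether a distance falls between the near and far planes. The 0.3 and 1000 values are the camera defaults mentioned in the lesson video, and the 0.1 m hand distance is an assumption.

```python
def is_rendered(distance, near, far=1000.0):
    """True if something at `distance` meters lies between the clipping planes."""
    return near <= distance <= far


hand_distance = 0.1  # assumed: a controller held about 10 cm from your face

print(is_rendered(hand_distance, near=0.3))   # False: clipped by the default near plane
print(is_rendered(hand_distance, near=0.01))  # True: visible with the smaller near plane
```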

As it is now, the game will work in VR. But to get finer control over how the camera is tracked, let’s attach the Tracked Pose Driver component.

- Set Device to Generic XR Device

- Set Pose Source to Head

- Disable Use Relative Transform

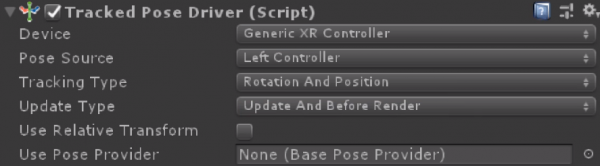
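The Use Relative Transform option is described in the lesson video as making changes in position relative to the initial position of the object. A rough plain-Python sketch of that description follows. This is a simplified reading of the lesson, not Unity’s actual implementation, and `driven_local_position` is a hypothetical name.

```python
def driven_local_position(tracked, initial, use_relative_transform):
    """Local position written to the object by the driver (positions only).

    use_relative_transform=True  -> tracked movement is added to the
                                    object's initial local position.
    use_relative_transform=False -> the tracked position is used as-is.
    """
    if use_relative_transform:
        return tuple(i + t for i, t in zip(initial, tracked))
    return tuple(tracked)


initial = (0.0, 1.5, 0.0)    # assumed: camera was placed 1.5 m up in the editor
tracked = (0.25, 1.75, 0.0)  # assumed: position reported by the headset

print(driven_local_position(tracked, initial, use_relative_transform=True))
print(driven_local_position(tracked, initial, use_relative_transform=False))
```

Because the Floor Offset object already handles the height for us, we disable the option so that the height is not applied twice.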

As well as the head, we need a tracked pose driver for both of the hands. Select both hand objects and attach the Tracked Pose Driver.

- Set Device to Generic XR Controller

- Set Pose Source to Left Controller for the Left Hand and Right Controller for the Right Hand

- Disable Use Relative Transform

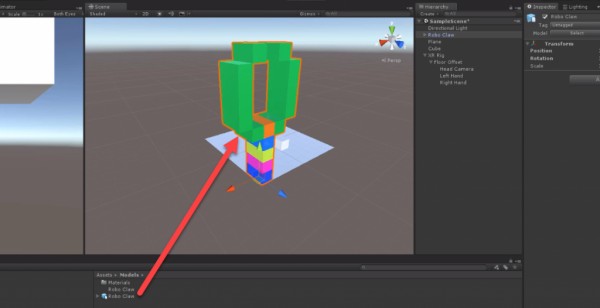

At the moment, the hand objects are empty, so we can’t see where our hands are. Let’s add some hand models. Drag the Robo Claw model into the scene and then drag the Robo Claw texture onto it to apply it.

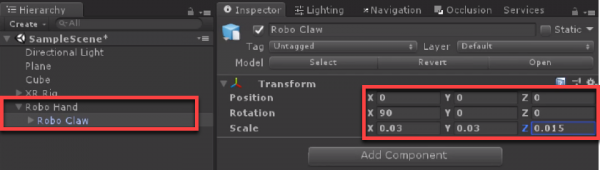

Right now it’s quite large and isn’t pointing forward. So let’s create a new empty GameObject and call it Robo Hand. Drag the model in as a child and change the model’s transform properties, leaving the Robo Hand parent as it is.

- Set the Position to 0, 0, 0

- Set the Rotation to 90, 0, 0

- Set the Scale to 0.03, 0.03, 0.015

This rotates the claw to point forward and scales it down to a usable size. Drag the Robo Hand into the Prefabs folder (create one if you need to) to save it as a prefab. Then delete the original in the scene.

Drag the Robo Hand prefab into the scene as a child of the Left Hand (make sure to set its position to 0, 0, 0). Do the same for the Right Hand.

If you have your headset connected, pressing play should allow you to test out your rig.

Transcript 1

Hello and welcome to the course. My name is Pablo Farias Navarro, and I’ll be your instructor. In this course, you’ll learn to develop applications that use the hand-tracked controllers in virtual reality. That means tracking the position and the rotation of your controllers and also accessing the buttons so that you can do different actions when the buttons are pressed. This will work in all of the main platforms, including desktop and mobile.

Our learning goals. You’ll be tracking the position and rotation of your headset and controllers. You’ll be reading the input of your controllers. That means what buttons are being pressed. And all of this will be done with a cross-platform approach. We’ll be using Unity’s natively supported features as much as we can so that everything you build in this course can be used in any of the main virtual reality platforms.

Having said that, we will look in detail into the following platforms: the Oculus Rift, the Oculus Go, the Gear VR, OpenVR-compatible headsets such as the HTC Vive, and the Windows Mixed Reality platform, so that if you own any of these devices, you’ll get everything up and running with detailed instructions. Our goal at ZENVA is to empower you, the learner, to get the most out of the courses in whichever manner suits you the most. That means you can watch the videos as many times as you like. You can read the summaries that contain screenshots and source code.

You can download the project files and you can use all of the projects and all of the material that we provide in our courses for your own projects. So you can use that in commercial projects, you can put it on GitHub, you can put in your game, or in client work.

We love hearing about what you guys are doing with the skills that we teach, so please let us know if you publish an application or a game or if this is helping you in your career. We’ve seen that those who plan for their success get the most out of our courses. That means setting times and reminders to watch the lessons and to use and practice the skills that you are learning in these courses. Thanks for watching! It is time now to start building cross-platform virtual reality applications.

Transcript 2

The XR camera rig (XR meaning extended reality, that is, the continuum between augmented reality and virtual reality) is a very important concept that will help you develop these types of applications.

Let’s start with the very basics.

A camera in Unity and other 3D engines is simply a device that captures and displays what’s happening in the game world to your user. In normal 3D applications, users can control the camera by using the mouse or the keyboard, or sometimes the application itself controls the camera, for example, in an ending sequence. That is an important difference from how things work in virtual reality and also in augmented reality. There, the user is in control of where the camera is looking, and in fact, if there’s also position tracking, they are also in control of the position of the camera, or at least the relative position of the camera from a certain origin. So you cannot impose those things on your user in these types of applications.

Also, in virtual reality you actually have two cameras in the game, one for each eye. Luckily for us, when working in Unity, you normally just work with one camera and the two eye cameras are created during runtime. An important type of extended reality application is the stationary experience. This is where the position of the camera is fixed and you have control over the rotation. We also have room-scale experiences, which is where the whole space is being tracked, the position of the floor is known, and you can move around.

Now this definition – there’s no standard, because I’ve seen different ways of talking about the same concepts. I have seen the definition of stationary experiences where there is a little bit of movement as well, although the position of the floor is not known.

A common setup in Unity is that you would create an empty object called the XR Camera Rig. Then you would place your camera inside of it and, if you have hand-tracked controllers, you would place those as well. If you are in a stationary experience, you have to give those a certain height from the floor. If you are in a room-scale experience, the height is given by the tracking.

What if you go into a vehicle? I said before that you cannot force the movement or the rotation of the user. Well, you can. If you think of reality, nobody can force you to look in a certain direction and nobody can force you to move, but if you go into a vehicle, the vehicle can move. So what you do is put the whole camera rig inside of the vehicle, or inside of the elevator or the aircraft, and then that vehicle can indeed move and it can indeed rotate, but the user is still in control of what happens inside of the vehicle or inside of the lift.

A better setup is to have the camera rig, but then also a floor offset containing object. This is another containing object, an empty object, and the reason why we are doing this is that it is sometimes a little easier to work with the camera when the camera is placed at the origin. So we can have this inside object, and then have the camera at the origin and have the hand controllers, if there are any, at a certain distance from the origin as well. When we have a room-scale experience, the origin still needs to go on the floor because, like I said before, the position of the camera and all the other elements is given by the tracking. Whether that’s optical sensors, lighthouses or inside-out tracking, that is how it works.

The other reason why this approach is preferable is that Unity already provides a script that lets you switch between a stationary experience and a room-scale experience in the Unity editor. That script uses the floor offset and lets you enter a value for the height, so that is why we will be using this approach, although just using the camera rig without the offset also works. It just doesn’t give you that flexibility. The way that this will work in Unity, which we’ll be seeing in the next lesson, is like this.

You have the camera rig, just an empty object which contains everything else. The camera rig is at the ground floor. Then you have the floor offset, the head, the left hand and the right hand. And what we will be using (this will be covered in a lot of detail, I’m only mentioning it here to give you a hint of what’s coming) is the Tracked Pose Driver component, which is a Unity component that can make a GameObject in your scene be tracked by either the headset or a controller. This is used and works in most main platforms these days.

Which is amazing, because it didn’t exist until a short time ago. So, to summarize what we’ve learned: in extended reality, the user controls the camera rotation, and also the relative position from some origin if there’s position tracking. The XR rig is simply a container for your tracked devices. That’s all it is, and you can add this floor offset container, which can make it easier to work across platforms, or if you want to have your camera placed at the origin.

Lastly, we have the Tracked Pose Driver, which is a Unity component used for XR tracking of different controllers and headsets, so we’ll be using that in the next few videos. Thanks for watching. I will see you in the next lesson.

Transcript 3

In this lesson, we are going to create our basic XR camera rig setup, which will work with the headset and hand-tracked controllers as well. I’ve gone and created a new Unity project, and what I’ll do now is delete the default camera that comes with Unity, just so that we have a clean slate.

Now I’m going to go here and add a plane for the ground. And also I’m going to add a cube, just so that there’s something to look at once we enable virtual reality. Next I want to create the basic XR camera setup that we saw previously, which requires us to have an empty object for the camera rig, an empty object for the floor offset, then a camera, and objects for the hands. So, I’m going to go here and create an empty object, which I’m going to rename as XR Rig. Inside of that object, I’m going to create another object for the floor offset. And inside of that, I’m going to create the camera, which will be the head. Rename this to Head Camera. I’m going to create an object for the left hand, and for the right hand. Great, so let’s go now and look at the camera here. So this is a usual camera.

There’s something we need to change. The clipping planes in the camera represent the distance range that is rendered. For example, the far plane means that you cannot see further than 1000 Unity units, which translates to 1000 meters. So you can change the visibility of the camera. Also, you can change the closest distance that you can see. The default might work well for normal 3D games, but in a virtual reality experience you might want to, let’s say, bring your hand controller to your face, so 0.3, which is 30 centimeters, or approximately one foot, is too big of a distance to have as a near clipping plane, because if you bring your hand to your face, you’re not going to see anything.

So what we want is just to use the smallest possible value, which is 0.01. If you set it to 0, it will default to that minimum value.

The other thing you want to make sure of is that this target eye is set to “both”. So that then, the left and the right eyes will be created for you when you’re running this in virtual reality. Now if you run this in virtual reality, the camera will work, the headset will work, but you don’t have much control of how it’s going to work. So we’re going to add here, a component called the Tracked Pose Driver, which allows you to set up different settings as to how this will be tracked.

So first of all, device, we’re going to leave this as Generic XR Device. That’s what we use for the camera. Pose Source, pose means a position and a rotation. So it’s like a transform, but without the scale. And we want to draw the pose from the head. From the headset. Then, tracking type. Here you can specify whether you want rotation and position tracking. If your headset has six degrees of freedom, this means it supports position tracking, you can still force it to only be rotation. If you want to create an experience, let’s say with 3D photos, where the user can’t really move around or shouldn’t really be able to move around much, you can set that to rotation only.

Then, update type, we’re going to leave this setting, because we want the position to be updated as much as possible, both when we’re doing calculations in the update method and when rendering, so that it is as precise as possible. So we’re going to leave this setting. Now, relative transform. That means that the changes in position are going to be relative to the initial position of the camera. We don’t want that, because remember that we are using an object that is going to lift the camera up when it’s stationary, or that is going to be on the ground, so we are going to be taking care of that ourselves. So we don’t want to have that relative position.

And lastly, we don’t need to put anything here, because that doesn’t apply for this particular case. For our left and right hand, we’re going to take a similar approach, which is to add that pose driver. So let’s start with the left hand here.

For the left hand, we want to set this as an XR controller, and this is fine as a left controller, so we’re going to leave that. Let’s leave this as rotation and position, same as before, and also no to relative transform, because the position of the controller, and also the rotation, will be given by the tracking. Just so you know, this also applies for, let’s say, the Oculus Go or Gear VR, because even though those controllers don’t track position, there is an algorithm that still estimates the position based on a model of an arm skeleton, so you want to leave this option like so.

And same thing for right, we’re going to change here to XR controller, and we’re going to change the source to right controller and uncheck relative transform. So we are now all set. Our basic setup is ready, but there’s one thing that’s missing.

We need to have some model for our hands. You could go here and add a cube, a small cube, but what I’m going to do is what you can do as well, is find a zip file named Existing Scripts and Models, and from that file, I’m going to drag the models folder into my project, and inside of that folder, there is this 3D model here of a robotic arm. And the other file here is a PNG, it’s the material. There we go.

Now, this thing is obviously huge, it needs to be real size for us to use it in a hand. Also, the hand that you use needs to point towards the forward position. So that’s the other thing we need to change. What we can easily do here is just create an empty object, which will contain that model, so I’m going to call this “Robo Hand” and I’m going to move this 3D model in here, and then we can do all the changes to the transform in the robotic claw, but leave the robotic hand as it is.

So, and then, we use that inside of our controllers. What I’m going to do here is, firstly, rotate this model by 90 degrees on X, so that it points in the right direction. I’m going to set its position to the origin, and I’m going to change the scale so that it is a good size, and I already know that this is the right scale here. When you are using your own models, you either use trial and error or use proper measurement to know exactly how big they need to be. If it looks too big, you just reduce it until you get to the right size.

Great, so now it’s much smaller, and what I’ll do is save this as a prefab, so that we can then reuse this easily. So I’m going to create a folder here named Prefabs, and bring this Robo Hand into my folder. And I’m also going to set this transform here to zero as well and apply that, so I’m going to delete this object now. So, next I’m going to drag my prefab to both the left hand and the right hand.

Great, so we have this setup now, we have everything we needed: the XR rig, the floor offset, the head, both hands. What I’m going to do now is demonstrate how this works in virtual reality. I’m going to be using an Oculus Rift, and I’m not going to cover how to set that up just yet. I’m only going to show you that this is working. There are lessons in the course, which you will get to after we cover input, that cover every single one of the different headsets.

In those lessons, I will show you how to configure all of the main headsets, so that then you can concentrate on the headsets that you own, or that you are interested in. Let’s go now into virtual reality, and see this working. Okay, so as you can see, I’m in virtual reality and both controllers are working fine, and I can look around.

What we’ll do in the next lesson is address the floor offset script, so that we can set our application to work as a stationary application, and also as a room scale experience, provided that is supported by your headset. So that’s all for this lesson. I will see you in the next video.

Interested in continuing? Check out the full VR Development with Controllers course, which is part of our Virtual Reality Mini-Degree.